Parallel File Systems for Today’s HPC

A growing number of industries require high-performance computing (HPC) clusters to uncover insights hidden in the massive amounts of data they collect. In genomics, for example, researchers at Iowa State University work with hundreds of gigabytes of data to study a single genome. These scientists, who are assembling sequences of Zea mays, are dealing with multi-gigabyte genomes, and they must sequence each genome to 150X coverage to get reliable data for their research. In another example, the high resolutions of today’s climate model simulations used in weather prediction can generate as much as 100 terabytes of data, according to a “Journal of Advances in Modeling Earth Systems” report by Michael F. Wehner and others.[i] Analyses of high-resolution seismic data similarly can involve data sets of more than 100 terabytes in a single file.
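To see why a multi-gigabyte genome turns into hundreds of gigabytes of data, recall that sequencing coverage is the total number of sequenced bases divided by the genome length. The raw sequence volume therefore scales as genome size × coverage. The minimal Python sketch below works through that arithmetic under two assumptions. First, it uses a hypothetical 2.5-gigabase genome, an illustrative figure that does not come from the Iowa State work. Second, it counts one byte per base and ignores quality scores, read headers, and compression, so real file sizes can differ substantially from the estimate. The function names are hypothetical.

```python
def raw_sequence_gigabases(genome_gigabases: float, coverage: float) -> float:
    """Total bases (in gigabases) needed to reach a given coverage depth.

    Coverage = total sequenced bases / genome length, so the total is
    simply genome length multiplied by coverage.
    """
    if genome_gigabases <= 0 or coverage <= 0:
        raise ValueError("genome size and coverage must be positive")
    return genome_gigabases * coverage


def approx_storage_gb(genome_gigabases: float, coverage: float,
                      bytes_per_base: float = 1.0) -> float:
    """Rough storage estimate in decimal gigabytes.

    Assumption: bytes_per_base = 1.0 counts only the base calls; quality
    scores, headers, and compression are deliberately ignored.
    """
    return raw_sequence_gigabases(genome_gigabases, coverage) * bytes_per_base


if __name__ == "__main__":
    # Hypothetical 2.5-gigabase genome sequenced to the 150X depth cited above.
    print(approx_storage_gb(2.5, 150))  # prints 375.0
```

The result, roughly 375 GB before any format overhead, lands in the "hundreds of gigabytes" range the researchers describe.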

Very few storage architectures can keep up with the computing systems that enterprises and academia use to process data of this magnitude reliably and in a reasonable amount of time. The usual solution is to deploy a parallel file system (PFS) whose I/O throughput ranges from hundreds of gigabytes per second to several terabytes per second. Most parallel file systems deployed today are either IBM’s Spectrum Scale* (formerly called General Parallel File System*, or GPFS*) or open source Lustre*. These two solutions present completely different purchasing models for organizations looking to deploy a high-performance data platform for massive data sets. Because Lustre is community-developed and open source licensed, it can deliver significant value in high-performance data solutions.

A Little PFS History

Spectrum Scale (or GPFS) was developed at IBM in the early 1990s and finally commercialized at the end of that decade. It can be deployed in different distributed parallel modes and is used in large commercial and supercomputing applications. It began its life on IBM’s AIX systems, but has since appeared on Linux* and Windows Server* systems.

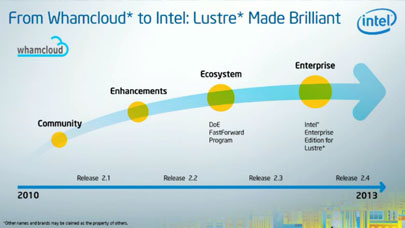

Lustre’s architecture began as an academic project at Carnegie Mellon University in the late 1990s. The file system was developed under the Accelerated Strategic Computing Initiative (ASCI) as part of a U.S. Department of Energy-funded project that included Hewlett-Packard and Intel. (ASCI drove the creation of the first teraflop supercomputer, the Intel-based ASCI Red, installed at Sandia National Laboratories in 1996.) Today, Lustre development continues under an open source model, with releases managed by OpenSFS*. Intel Corporation contributes the majority of code enhancements to Lustre. It also adds its own features in several Intel-branded editions designed for enterprise and cloud deployments.

Partnerships for Success

High-performance storage solutions capable of serving today’s petascale and tomorrow’s exascale computing are large, complex systems with tens of thousands of spinning or solid-state drives. They are not typically something an in-house IT department will tackle alone. Organizations usually seek out a partner with expertise in the software, the hardware, and the overall requirements for scalability, optimization, configuration, networking, backup, and disaster recovery. This raises one of the critical decisions companies face in choosing a high-performance data solution. They can adopt a closed-source product with fee-based licensing and potential vendor lock-in. Alternatively, they can expand their choices and flexibility with Lustre, which combines hardware neutrality (purchasers can choose from a wide variety of manufacturers and technologies) with an open source licensing model.

Many customers choose a proprietary solution from vendors with strong reputations for expertise, reliability, and trust. But in today’s marketplace, budgets are tight and competition is fierce. Choice is a key ingredient in making technology investments efficient and cost-effective. New entrants offering high-performance data solutions designed around Lustre have been making serious inroads into the enterprise storage market for several years.

Around the world, a wide selection of smaller but equally competent technology integrators have developed storage solution expertise with Lustre. They deliver a level of personal attention and rapid response to customers that rivals that of large, expensive vendors. Most of these integrators belong to Intel Corporation’s Lustre reseller program, which numbers more than 20 companies, and they can offer highly competitive solutions around Lustre.

Adding Value through Open Source

For decades, open source has been a proven way for enterprises to deploy cost-effective solutions built on reliable, high-performance software. Linux is the open source poster child, and Red Hat is the standard bearer for building a profitable enterprise business around it. The company provides enterprise-grade services and its own enhanced products built around the Linux kernel, while continuing to contribute to the community’s development tree. Other companies, like Novell with its SUSE distribution, have followed the same model.

When Lustre entered the open source community, several organizations formed to maintain Lustre’s traction and momentum in HPC. They also recognized Lustre’s enormous potential for both academia and enterprise. So they adopted the same open source model: contributing to the code while offering enhanced products and support around the software. This benefited enterprises because, as with the expansion of Linux into the enterprise, IT managers wanted the assurance of support and longevity behind Lustre before committing critical applications to the file system.

“Intel is the clear leader in the Lustre Open Source project,” according to Brent Gorda, general manager of Intel’s High Performance Data Division (HPDD), the group responsible for Lustre at the company. “We are the chief contributors to the Lustre code and have the largest pool of Lustre experts in the community. Building on the open source version, we offer enterprise-class and cloud versions of Lustre with Intel software enhancements and enterprise-grade services to customers. In this way we are adding value to open source Lustre and helping commercial HPC easily take advantage of the fastest scalable parallel file system on the planet.”

According to Gorda, Lustre eliminates vendor lock-in and gives customers more choices. If customers use a technology partner for their high-performance data solution, they can issue an RFP to many vendors, obtain the optimal hardware technology at the best value, and use Lustre as the software. This way, they avoid the often steep and changing license fees that accompany proprietary solutions, while still getting the support expertise they need.

Lustre in Leading Installations

Lustre is the high-performance file system of choice for many large installations, including Lawrence Livermore National Laboratory’s Sequoia supercomputer. At the core of the HPC clusters at the San Diego Supercomputer Center (SDSC) at the University of California, San Diego is Data Oasis. It is a scalable Lustre parallel file system with up to 12 PB (petabytes) of capacity and throughput exceeding 200 gigabytes/second, and it supports 10,000 simultaneous users. Designed and deployed by Aeon Computing, also of San Diego, the 72-node system is linked to SDSC’s Trestles, Gordon, TSCC, and Comet clusters. Data Oasis’s sustained speeds mean researchers can retrieve or store 240 TB of data in about 20 minutes. Early this year, Data Oasis began undergoing significant upgrades. These include ZFS, a combined file system and logical volume manager originally designed by Sun Microsystems, paired with a new hardware server configuration developed under a partnership among SDSC, Aeon Computing, and Intel.
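The 20-minute figure follows directly from the sustained bandwidth. 240 TB is 240,000 GB, and 240,000 GB ÷ 200 GB/s = 1,200 s, or 20 minutes. The minimal Python sketch below generalizes that arithmetic. The function name `transfer_seconds` is hypothetical. The sketch also assumes decimal units (1 TB = 1,000 GB) and an aggregate rate sustained for the entire transfer, which real workloads with metadata traffic and contention rarely achieve.

```python
def transfer_seconds(size_tb: float, bandwidth_gb_per_s: float) -> float:
    """Seconds needed to move size_tb terabytes at a sustained aggregate rate.

    Assumptions: decimal units (1 TB = 1000 GB) and a constant bandwidth
    with no metadata, contention, or startup overhead.
    """
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return size_tb * 1000.0 / bandwidth_gb_per_s


if __name__ == "__main__":
    # Data Oasis figure from the text: 240 TB at 200 GB/s, shown in minutes.
    print(transfer_seconds(240, 200) / 60)  # prints 20.0
    # A single 100 TB seismic file (from the introduction) at the same rate.
    print(transfer_seconds(100, 200) / 60)
```

At the same 200 GB/s, a single 100 TB seismic file like the one mentioned in the introduction would take about 8.3 minutes to read or write.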

Game Changing Offerings

As the core of today’s open source, high-performance, scalable data storage solutions, Lustre, with Intel enhancements and support, presents a valuable alternative to closed-source, proprietary solutions. Lustre has been a game changer for high-performance data storage systems in academia and enterprise HPC. It continues to receive the critical enhancements that enterprises want, with releases managed through OpenSFS and the Lustre community.

Intel’s Lustre offerings include Intel Enterprise Edition for Lustre software, Intel Cloud Edition for Lustre software, and Intel Foundation Edition for Lustre software, each designed to meet specific needs in the marketplace. The Enterprise Edition combines the latest Lustre release with tools and features that enterprise IT has been requesting. These include easier deployment, support for significantly more metadata servers, increased reliability, hierarchical storage management, and the ability to interface with Hadoop workloads. Support is available directly from Intel and through Intel resellers, giving companies greater choice without sacrificing the ease of management and support they want in a storage solution. The Cloud Edition, available through the Amazon Web Services Marketplace, lets customers stand up a scalable parallel file system in minutes for any application they want to run on Amazon’s Elastic Compute Cloud (EC2). This open source approach, from both the Lustre community and the companies building operations around the software, adds considerable value for customers who need high-performance storage solutions today.

About the author

Ken Strandberg is a technical storyteller. He writes articles, white papers, seminars, web-based training, video and animation scripts, and technical marketing and interactive collateral for emerging technology companies, Fortune 100 enterprises, and multi-national corporations. Mr. Strandberg’s technology areas include Software, HPC, Industrial Technologies, Design Automation, Networking, Medical Technologies, Semiconductor, and Telecom. Mr. Strandberg can be reached at ken@catlowcommunications.com.


© HPC Today 2024 - All rights reserved.
