The latest Top500 list was released today, and NVIDIA is proud to announce that its new DGX SATURNV ranks as the world’s most efficient supercomputer and the 28th fastest overall.

The supercomputer is a 3.3-petaflop cluster of DGX-1 systems powered by the new Tesla P100 GPUs. It delivers 9.46 gigaflops per watt, an impressive 42 percent improvement over the 6.67 gigaflops per watt achieved by the most efficient machine on the previous Top500 list, released in June.
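For readers who want to check the headline figure, the 42 percent gain is simply the relative difference between the two efficiency ratings quoted above. A minimal Python sketch using only those two numbers (the variable names are ours, for illustration):

```python
# Efficiency figures quoted in the article, in gigaflops per watt
saturnv_gflops_per_watt = 9.46
previous_best_gflops_per_watt = 6.67  # most efficient machine on the June list

# Relative improvement = (new - old) / old
improvement = (saturnv_gflops_per_watt - previous_best_gflops_per_watt) / previous_best_gflops_per_watt

print(f"Efficiency improvement: {improvement:.1%}")  # prints "Efficiency improvement: 41.8%"
```

The exact ratio is about 41.8 percent, which rounds to the 42 percent NVIDIA quotes.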

In this efficiency race, SATURNV is followed by Piz Daint, the supercomputer at the Swiss National Supercomputing Centre, with a rating of 7.45 gigaflops per watt. Piz Daint is an interesting case: not only is it efficient, it also managed to hold on to its 8th position, thanks to NVIDIA and a remarkable 3.5-petaflop upgrade also based on Tesla P100 GPUs.

According to Roy Kim, Director of Accelerated Data Center Computing at NVIDIA, “That efficiency is key to building machines capable of reaching exascale speeds – that’s 1 quintillion, or 1 billion billion, floating-point operations per second. Such a machine could help design efficient new combustion engines, model clean-burning fusion reactors, and achieve new breakthroughs in medical research.” He added: “GPUs – with their massively parallel architecture – have long powered some of the world’s fastest supercomputers. More recently, they’ve been key to an AI boom that’s given us machines that perceive the world as we do, understand our language and learn from examples in ways that exceed our own.”

“Using GPUs to Design GPUs”

But NVIDIA did not build its own supercomputer just to set a record; the company intends to put this power to work: “We’re convinced AI can give every company a competitive advantage. That’s why we’ve assembled the world’s most efficient – and one of the most powerful – supercomputers to aid us in our own work,” said Roy Kim.

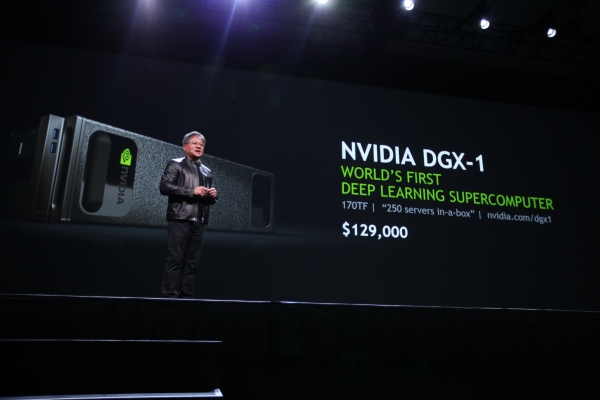

SATURNV was assembled by a team of a dozen engineers from 125 DGX-1 systems, which means that at list price a similar cluster would cost around $16 million (a DGX-1 sells for $129,000, although a discount is likely at this volume). NVIDIA has several uses in mind for the machine. It will help the company build the autonomous driving software for its NVIDIA DRIVE PX 2 self-driving vehicle platform. It is also being used to train neural networks that understand chipset design and very-large-scale integration (VLSI), so engineers can work more quickly and efficiently. “Yes, we’re using GPUs to help us design GPUs,” said Roy Kim, who is clearly not afraid of opening Pandora’s box. Finally, SATURNV’s power will be used to design and train new deep learning networks quickly.
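The $16 million estimate is straightforward arithmetic on the list price. The sketch below reproduces it and, using the eight Tesla P100 GPUs that each DGX-1 contains, also counts the GPUs in the cluster. It assumes list price only and ignores discounts, networking, storage and integration costs; the variable names are illustrative.

```python
# Figures from the article
dgx1_count = 125
dgx1_list_price_usd = 129_000
gpus_per_dgx1 = 8  # Tesla P100 GPUs per DGX-1

# List-price estimate only: ignores volume discounts, networking, storage and integration
cluster_cost_usd = dgx1_count * dgx1_list_price_usd
total_gpus = dgx1_count * gpus_per_dgx1

print(f"Estimated cost: ${cluster_cost_usd:,}")  # prints "Estimated cost: $16,125,000"
print(f"Tesla P100 GPUs: {total_gpus:,}")        # prints "Tesla P100 GPUs: 1,000"
```

In other words, SATURNV gathers roughly a thousand Tesla P100 GPUs behind its $16 million list-price tag.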

AI: the future of supercomputing

NVIDIA believes that such systems can unlock the power of AI for enterprises, research groups, and academia. The DGX-1, the real star behind SATURNV, is an appliance we’ve already covered. It integrates deep learning software, development tools and eight Tesla P100 GPUs (based on the new Pascal architecture), and packs computing power equal to 250 x86 servers into a device about the size of a stove top.

The DGX-1 has already been adopted by teams looking to harness AI in a wide variety of settings.

Following are some examples provided by NVIDIA:

- Enterprise software giant SAP is using DGX-1 AI supercomputers to build machine learning solutions for its 320,000 customers.

- Researchers at groups such as OpenAI, Stanford and New York University are using DGX-1 for their cutting-edge work.

- Startup BenevolentAI uses DGX-1 as part of its effort to accelerate drug discovery by using deep natural language processing, machine learning and AI to formulate new, usable knowledge from complex scientific information.

“We’re confident AI and DGX-1 will play a key role in even more breakthroughs to come,” concluded Roy Kim, a claim we are inclined to believe given that, just a few months after its launch, the DGX-1 already looks like a technological success.

© HPC Today 2024 - All rights reserved.